
The following are DataStage best practices:

- Select a suitable configuration file (number of nodes depending on data volume).
- Size buffer memory correctly and select the proper partitioning.
- Turn off runtime column propagation wherever it is not required.
- Take care with the sorting of the data.
- Handle null values with Modify instead of Transformer.
- Try to decrease the use of Transformer stages.

Dear sir,

As stated above, my problem is with the routine Lookup, which among others is written by Ascential. I have a job that copies data from a table into a hash file. Now I need to read the contents of this hash file (a particular field) by supplying the key. The value retrieved has to be passed to a job as a parameter. In spite of the hash file clearly existing, the Lookup routine refuses to acknowledge its presence.

I keep getting the message '.Table Not Found.' I believe this kind of usage requires some kind of pointer creation in DataStage Administrator which links the name of the hash file to the project. However, I do not know how to carry out this configuration. I would be most obliged if I could get some help in this regard.

Sincerely,
S.


I am attaching a DSX file containing the routine called Lookup in case it helps towards finding a solution.

You can try these instructions to add such a pointer to the VOC.

Telnet to the DataStage server, change to the DSHOME directory (where the dsenv file is), run the dsenv file, then start the DataStage command line.

    cd `cat /.dshome`
    ./dsenv
    bin/dssh

Now you should have a prompt. Log to your project. Case matters.

    LOGTO Project1

Create a VOC entry for the hash file. Case matters. In this example, 'BrowserLu' is my hash file name. My comments are out to the side of each line.

    ED VOC BrowserLu
    New record.
    -: I                                      I = insert
    0001= F                                   F = file type
    0002= /dwhp/data/wba/hash/BrowserLu       path and filename of hash file
    0003= /dwhp/data/wba/hash/DBrowserLu      path and filename of dictionary
    0004=                                     hit Enter for a blank line
    Bottom at line 3.
    -: FI                                     FI = file (to save the entry)
    'BrowserLu' filed in file 'VOC'.

You can enter 'Q' to quit the command line. If you need to delete such a pointer but not delete the hash file itself, you can use the DELETE VOC command.

    DELETE VOC BrowserLu

Eric

This last one is what worked for me: SETFILE. Thanks a lot to all of you for your help.

Although my problem is solved, I'm not sure I understood all your solutions, especially those of you who said that this routine in its original form isn't meant to work with directory-based hash files. This is because in all the other projects where it is used the hash files are directory based and the routine exists as attached yesterday. Thus there was no modification of the 'Open' function to 'Openpath' etc. What I now understand is that the SETFILE command takes care of this. I hope I am not mistaken.

My hash files are created using a normal DataStage job wherein data is extracted from (in my case) an Oracle stage and routed to a directory hash file where the path is specified by a parameter (in my case /datastagetemp1pp/devautres/hash/). Now I find that the dictionary file is very much present in this directory. As I see it, a subdirectory with the name of the hash file (e.g. DIO) is created, and the files DATA.30 and OVER.30 are stored within this subdirectory, while the dictionary file DDIO is stored in the parent directory /datastagetemp1pp/devautres/hash/.

On the advice of Ascential technical support, when I ran the SETFILE command I supplied the hash file pathname as the directory containing the two .30 files, and thus the subfolder. It's therefore obvious that in this subdirectory the dictionary file wasn't found, since it is stored one level up. Is this a mistake? Should I supply the location name as the parent folder and not the subdirectory?

No, it's not a mistake and their instructions are correct. The SETFILE command is perfectly aware of where the dictionary file lives, so that is not the issue. Suggest you recontact Support and see if they can explain the behaviour. Anything 'unusual' about the D file? Permissions / ownership different, perhaps?

-craig

Contacted DataStage support and it appears that the pathname supplied to SETFILE shouldn't contain a slash at the end, which was the reason for my error message regarding the dictionary file. Solved n sorted. Thanks everyone once again.

However, one piece of information I asked for remains unanswered. Is there any online resource where I can get a list of commands that are executed in DataStage Administrator on a particular project through the 'Command' window? For example:

- SETFILE: create a pointer
- DELETE VOC: delete an existing pointer

As of now I want to see how many hash file pointers are created for a project.

Cheers
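The trailing-slash problem reported in the thread can be guarded against before the command is ever typed. The following is a minimal sketch, not part of the original thread: the helper name `build_setfile_command` is hypothetical, and the command layout `SETFILE pathname filename OVERWRITING` is an assumption about the UniVerse syntax that should be checked against the documentation for your release.

```python
def build_setfile_command(hash_dir: str, voc_name: str) -> str:
    """Return a SETFILE command line for a directory-based hashed file.

    Trailing slashes are stripped from the path, because the thread reports
    that a trailing slash caused the dictionary-file error.
    The "SETFILE <path> <name> OVERWRITING" layout is an assumption.
    """
    cleaned = hash_dir.rstrip("/")
    if not cleaned:
        raise ValueError("hash_dir must name a directory below the root")
    return f"SETFILE {cleaned} {voc_name} OVERWRITING"


if __name__ == "__main__":
    # Path taken from the thread; the trailing slash is removed before use.
    print(build_setfile_command("/datastagetemp1pp/devautres/hash/DIO/", "DIO"))
```

The resulting line could then be pasted into the Administrator 'Command' window or the dssh prompt after LOGTO.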

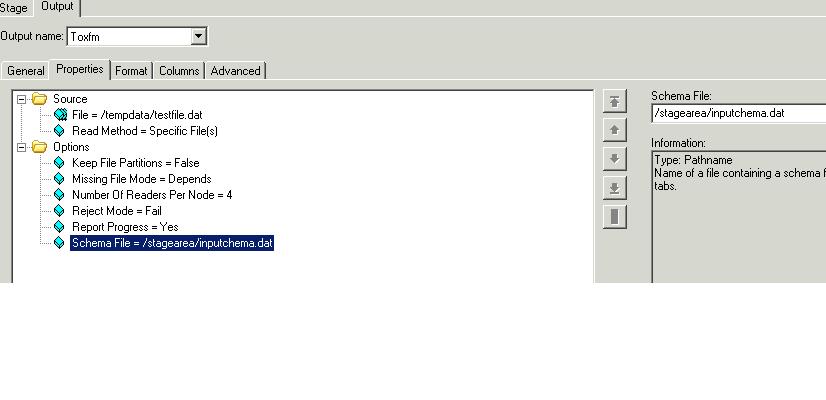

Dynamic Relational Stages (DRS):

- Read data from any DataStage stage.
- Read data from any supported relational database.
- Write to any DataStage stage.
- Write to any supported relational database.

PeopleSoft-delivered ETL jobs use the DRS stage for all database sources or targets. This is represented in the Database group as 'Dynamic RDBMS.' When you create jobs, it is advisable to use the DRS stage rather than a specific type such as DB2, because a DRS will dynamically handle all of the PeopleSoft-supported database platforms.

The following example shows a DRS database stage in a delivered Campus Solutions Warehouse job.

Image: DRS Stage Output Window

In this example, the table name listed is the source of the data that this stage uses.

The Columns window enables you to select which columns of data you want to pass through to the next stage. When you click the Load button, the system queries the source table and populates the grid with all the column names and properties. You can then delete rows that are not needed.

| Processing Stage | Description |
| --- | --- |
| Transformer | Transformer stages perform transformations and conversions on extracted data. |
| Aggregator | Aggregator stages group data from a single input link and perform aggregation functions such as COUNT, SUM, AVERAGE, FIRST, LAST, MIN, and MAX. |
| FTP | FTP stages transfer files to other machines. |
| Link Collector | Link Collectors collect partitioned data and piece it together. |
| InterProcess | An InterProcess (IPC) stage is a passive stage which provides a communication channel between WebSphere DataStage processes running simultaneously in the same job. It allows you to design jobs that run on SMP systems with great performance benefits. |
| Pivot | Pivot, an active stage, maps sets of columns in an input table to a single column in an output table. |
| Sort | Sort stages allow you to perform sort operations. |
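To make the Aggregator description concrete, here is a small Python sketch of grouping rows from a single input and computing the functions named above. It only illustrates the idea and is not how DataStage implements the stage; the function name `aggregate` and the row layout are assumptions made for the example.

```python
from collections import defaultdict


def aggregate(rows, group_key, value_key):
    """Group rows by group_key and compute per-group aggregates of value_key."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])
    return {
        key: {
            "COUNT": len(vals),
            "SUM": sum(vals),
            "AVERAGE": sum(vals) / len(vals),
            "FIRST": vals[0],   # first value seen for the group
            "LAST": vals[-1],   # last value seen for the group
            "MIN": min(vals),
            "MAX": max(vals),
        }
        for key, vals in groups.items()
    }


if __name__ == "__main__":
    sample = [
        {"dept": "A", "amount": 10},
        {"dept": "A", "amount": 30},
        {"dept": "B", "amount": 5},
    ]
    for dept, stats in aggregate(sample, "dept", "amount").items():
        print(dept, stats)
```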

Transformer Stages

Transformer stages enable you to:

- Add, delete, or move columns.
- Apply expressions to data.
- Use lookups to validate data.
- Filter data using constraints.
- Edit column metadata and derivations.
- Define local stage variables, and before-stage and after-stage subroutines.
- Specify the order in which the links are processed.
- Pass data on to either another transformer stage, or to a target stage.

The following is an example of a delivered Transformer Stage (TransAssignValues Stage).

Creating Transformer Stages

You create a transformer stage by opening the Processing group in the palette, selecting the Transformer stage, and clicking in the Diagram window. After creating links to connect the transformer to a minimum of two other stages (the input and output stages), double-click the Transformer icon to open the Transformer window.

In the example above, two boxes are shown in the upper area of the window representing two links. Transformer stages can have any number of links with a minimum of two. Hence, there could be any number of boxes in the upper area of the window. Labeling your links appropriately makes it easier for you to work in the Transformer Stage window.

The lines that connect the links define how the data flows between them. When you first create a new transformer, you link it to other stages, and then open it for editing. There will not be any lines connecting the Link boxes. These connections can be created manually by clicking and dragging from a particular column of one link to a column in another link, or by selecting the Column Auto-Match button on the toolbar.

What is DataStage?

DataStage is an ETL tool that extracts data, transforms it, and loads it from source to target. The data sources might include sequential files, indexed files, relational databases, external data sources, archives, enterprise applications, etc. DataStage facilitates business analysis by providing quality data to help in gaining business intelligence.

DataStage is used in large organizations as an interface between different systems. It takes care of extraction, translation, and loading of data from source to the target destination. It was first launched by VMark in the mid-90s. With IBM acquiring DataStage in 2005, it was renamed IBM WebSphere DataStage and later IBM InfoSphere DataStage.

The versions of DataStage available in the market so far include Enterprise Edition (PX), Server Edition, MVS Edition, DataStage for PeopleSoft, and so on. The latest edition is IBM InfoSphere DataStage.

IBM Information Server includes the following products:

- IBM InfoSphere DataStage
- IBM InfoSphere QualityStage
- IBM InfoSphere Information Services Director
- IBM InfoSphere Information Analyzer
- IBM Information Server FastTrack
- IBM InfoSphere Business Glossary

Unified user interface

A graphical design interface is used to create InfoSphere DataStage applications (known as jobs). Each job determines the data sources, the required transformations, and the destination of the data. Jobs are compiled to create parallel job flows and reusable components. They are scheduled and run by the InfoSphere DataStage and QualityStage Director. The Designer client manages metadata in the repository.

Step 1) Create a source database referred to as SALES. Under this database, create two tables, Product and Inventory.

Step 2) Run the following command to create the SALES database.

    db2 create database SALES

Step 3) Turn on archival logging for the SALES database. Also, back up the database by using the following commands.

    db2 update db cfg for SALES using LOGARCHMETH1 LOGRETAIN
    db2 backup db SALES

Step 4) In the same command prompt, change to the setupDB subdirectory in the sqlrepl-datastage-tutorial directory that you extracted from the downloaded compressed file.

Step 5) Use the following command to create the Inventory table and import data into it.

    db2 import from inventory.ixf of ixf create into inventory

Step 6) Create a target table. Name the target database STAGEDB.

Now that you have created both the source and target databases, the next step is to see how to replicate between them.

Creating the SQL Replication objects

The image below shows how the flow of change data is delivered from the source to the target database. You create a source-to-target mapping between tables, known as subscription set members, and group the members into a subscription.

The unit of replication within InfoSphere CDC (Change Data Capture) is referred to as a subscription. The changes made in the source are captured in the 'Capture control table', sent to the CD table, and then applied to the target table. The Apply program holds the details about the row from which changes need to be applied. It also joins the CD tables in the subscription set.

A subscription contains mapping details that specify how data in a source data store is applied to a target data store. Note that CDC is now referred to as InfoSphere Data Replication. When a subscription is executed, InfoSphere CDC captures changes on the source database. InfoSphere CDC delivers the change data to the target, and stores sync point information in a bookmark table in the target database. InfoSphere CDC uses the bookmark information to monitor the progress of the InfoSphere DataStage job. In the case of failure, the bookmark information is used as the restart point.

Step 5) In the same command prompt, use the following command to create the Apply control tables.

    asnclp -f crtCtlTablesApplyCtlServer.asnclp

Step 6) Locate the crtRegistration.asnclp script file and replace all instances of the user ID placeholder with the user ID for connecting to the SALES database. Also, change the password placeholder to the connection password.

Step 7) To register the source tables, use the following script. As part of creating the registration, the ASNCLP program will create two CD tables.
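The bookmark idea described above, where a stored sync point is the restart position after a failure, can be sketched in a few lines of Python. This is an illustration under stated assumptions only: the function `apply_changes`, the `(seq, key, value)` change layout, and the dictionaries standing in for the target and bookmark tables are all hypothetical, and none of this reflects how InfoSphere CDC is actually implemented.

```python
def apply_changes(changes, target, bookmark):
    """Apply change records whose sequence number is past the bookmark.

    changes  : list of (seq, key, value) tuples, ordered by seq
    target   : dict standing in for the target table
    bookmark : dict with a "last_seq" entry standing in for the bookmark table
    """
    for seq, key, value in changes:
        if seq <= bookmark["last_seq"]:
            continue  # already applied before the restart, so skip it
        target[key] = value
        bookmark["last_seq"] = seq  # record the sync point after each apply
    return target


if __name__ == "__main__":
    changes = [(1, "widget", 10), (2, "gadget", 4), (3, "widget", 7)]
    target, bookmark = {}, {"last_seq": 0}
    apply_changes(changes[:2], target, bookmark)  # run stops after two changes
    apply_changes(changes, target, bookmark)      # restart replays only change 3
    print(target, bookmark)
```

Because the bookmark is advanced only after a change is applied, replaying the full change list after a failure does not apply the earlier changes twice.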

DataStage is a comprehensive tool for the fast, easy creation and maintenance of data marts and data warehouses. It provides the tools you need to build, manage, and expand them. With DataStage, you can build solutions faster and give users access to the data and reports they need.

With DataStage you can:

- Design the jobs that extract, integrate, aggregate, load, and transform the data for your data warehouse or data mart.
- Create and reuse metadata and job components.
- Run, monitor, and schedule these jobs.
- Administer your development and execution environments.

The DataStage client components are:

- Administrator: Administers DataStage projects and conducts housekeeping on the server.
- Designer: Creates DataStage jobs that are compiled into executable programs.
- Director: Used to run and monitor the DataStage jobs.
- Manager: Allows you to view and edit the contents of the repository.

Use the Administrator to specify general server defaults, add and delete projects, and to set project properties. The Administrator also provides a command interface to the UniVerse repository.

Use the Administrator Project Properties window to:

- Set job monitoring limits and other Director defaults on the General tab.
- Set user group privileges on the Permissions tab.
- Enable or disable server-side tracing on the Tracing tab.
- Specify a user name and password for scheduling jobs on the Schedule tab.
- Specify hashed file stage read and write cache sizes on the Tunables tab.

Use the Manager to store and manage reusable metadata for the jobs you define in the Designer. This metadata includes table and file layouts and routines for transforming extracted data.

Manager is also the primary interface to the DataStage repository. In addition to table and file layouts, it displays the routines, transforms, and jobs that are defined in the project. Custom routines and transforms can also be created in Manager.

The DataStage Designer allows you to use familiar graphical point-and-click techniques to develop processes for extracting, cleansing, transforming, integrating and loading data into warehouse tables. The Designer provides a “visual data flow” method to easily interconnect and configure reusable components. Use the Director to validate, run, schedule, and monitor your DataStage jobs. You can also gather statistics as the job runs.

- Define your project's properties: Administrator
- Open (attach to) your project
- Import metadata that defines the format of data stores your jobs will read from or write to: Manager
- Design the job: Designer
  - Define data extractions (reads)
  - Define data flows
  - Define data integration
  - Define data transformations
  - Define data constraints
  - Define data loads (writes)
  - Define data aggregations
- Compile and debug the job: Designer
- Run and monitor the job: Director

All your work is done in a DataStage project. Before you can do anything, other than some general administration, you must open (attach to) a project. Projects are created during and after the installation process. You can add projects after installation on the Projects tab of Administrator. A project is associated with a directory.

The project directory is used by DataStage to store your jobs and other DataStage objects and metadata. You must open (attach to) a project before you can do any work in it. Projects are self-contained. Although multiple projects can be open at the same time, they are separate environments. You can, however, import and export objects between them. Multiple users can be working in the same project at the same time. However, DataStage will prevent multiple users from accessing the same job at the same time.

Recall from module 1: In DataStage all development work is done within a project. Projects are created during installation and after installation using Administrator. Each project is associated with a directory. The directory stores the objects (jobs, metadata, custom routines, etc.) created in the project. Before you can work in a project you must attach to it (open it). You can set the default properties of a project using DataStage Administrator.

The logon screen for Administrator does not provide the option to select a specific project (unlike the other DataStage clients). The Licensing Tab is used to change DataStage license information. Click Properties on the DataStage Administration window to open the Project Properties window. There are nine tabs. (The Mainframe tab is only enabled if your license supports mainframe jobs.) The default is the General tab. If you select the Enable job administration in Director box, you can perform some administrative functions in Director without opening Administrator. When a job is run in Director, events are logged describing the progress of the job.

For example, events are logged when a job starts, when it stops, and when it aborts. The number of logged events can grow very large. The Auto-purge of job log box allows you to specify conditions for purging these events. You can limit the logged events either by number of days or by number of job runs.
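The two purge conditions, by age in days or by number of job runs, amount to simple filters over the event log. The sketch below shows the logic only; the event layout (a `time` and a `run` number per event) and both function names are assumptions for illustration, not DataStage's internal log format.

```python
from datetime import datetime, timedelta


def purge_by_age(events, now, max_days):
    """Keep only events logged within the last max_days days."""
    cutoff = now - timedelta(days=max_days)
    return [e for e in events if e["time"] >= cutoff]


def purge_by_runs(events, max_runs):
    """Keep only events that belong to the most recent max_runs job runs."""
    run_ids = sorted({e["run"] for e in events})
    # Guard against max_runs == 0, because run_ids[-0:] would keep everything.
    keep = set(run_ids[-max_runs:]) if max_runs > 0 else set()
    return [e for e in events if e["run"] in keep]


if __name__ == "__main__":
    log = [
        {"run": 1, "time": datetime(2024, 1, 1), "msg": "Job started"},
        {"run": 2, "time": datetime(2024, 1, 8), "msg": "Job started"},
        {"run": 3, "time": datetime(2024, 1, 9), "msg": "Job aborted"},
    ]
    print(purge_by_age(log, datetime(2024, 1, 10), max_days=3))
    print(purge_by_runs(log, max_runs=2))
```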

Use this page to set user group permissions for accessing and using DataStage. All DataStage users must belong to a recognized user role before they can log on to DataStage. This helps to prevent unauthorized access to DataStage projects. There are three roles of DataStage user:

- DataStage Developer, who has full access to all areas of a DataStage project.
- DataStage Operator, who can run and manage released DataStage jobs.
- <None>, who does not have permission to log on to DataStage.

UNIX note: In UNIX, the groups displayed are defined in /etc/group.

This tab is used to enable and disable server-side tracing. The default is for server-side tracing to be disabled. When you enable it, information about server activity is recorded for any clients that subsequently attach to the project.

This information is written to trace files. Users with in-depth knowledge of the system software can use it to help identify the cause of a client problem. If tracing is enabled, users receive a warning message whenever they invoke a DataStage client. Warning: Tracing causes a lot of server system overhead. This should only be used to diagnose serious problems. On the Tunables tab, you can specify the sizes of the memory caches used when reading rows in hashed files and when writing rows to hashed files. Hashed files are mainly used for lookups and are discussed in a later module.

You should enable OSH for viewing – OSH is generated when you compile a job. Metadata is “data about data” that describes the formats of sources and targets. This includes general format information such as whether the record columns are delimited and, if so, the delimiting character. It also includes the specific column definitions.

DataStage Manager is a graphical tool for managing the contents of your DataStage project repository, which contains metadata and other DataStage components such as jobs and routines. The left pane contains the project tree. There are seven main branches, but you can create subfolders under each. Select a folder in the project tree to display its contents. Any set of DataStage objects, including whole projects, which are stored in the Manager Repository, can be exported to a file. This export file can then be imported back into DataStage.

Import and export can be used for many purposes, including:

- Backing up jobs and projects.
- Maintaining different versions of a job or project.
- Moving DataStage objects from one project to another. Just export the objects, move to the other project, then re-import them into the new project.
- Sharing jobs and projects between developers. The export files, when zipped, are small and can be easily emailed from one developer to another.

Click Export>DataStage Components in Manager to begin the export process. Any object in Manager can be exported to a file.

Use this procedure to back up your work or to move DataStage objects from one project to another. Select the types of components to export. You can select either the whole project or a portion of the objects in the project.

Specify the name and path of the file to export to. By default, objects are exported to a text file in a special format. By default, the extension is dsx. Alternatively, you can export the objects to an XML document. The directory you export to is on the DataStage client, not the server.

To import DataStage components, click Import>DataStage Components. Select the file to import.

Click Import all to begin the import process or Import selected to view a list of the objects in the import file. You can import selected objects from the list. Select the Overwrite without query button to overwrite objects with the same name without warning. Table definitions define the formats of a variety of data files and tables. These definitions can then be used and reused in your jobs to specify the formats of data stores.

For example, you can import the format and column definitions of the Customers.txt file. You can then load this into the sequential source stage of a job that extracts data from the Customers.txt file. You can load this same metadata into other stages that access data with the same format. In this sense the metadata is reusable. It can be used with any file or data store with the same format.
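The reuse of one table definition across several files with the same format can be pictured as a single list of column definitions applied to any matching delimited file. The sketch below is illustrative only: the column names and types in `CUSTOMER_COLUMNS` are invented for the example (the source does not give the layout of Customers.txt), and `read_delimited` is a hypothetical helper, not a DataStage API.

```python
import csv
import io

# Hypothetical column definitions: (column name, conversion function).
CUSTOMER_COLUMNS = [("CustID", int), ("Name", str), ("Balance", float)]


def read_delimited(text, columns, delimiter=","):
    """Parse delimited text into typed rows using reusable column definitions."""
    reader = csv.reader(io.StringIO(text), delimiter=delimiter)
    return [
        {name: cast(value) for (name, cast), value in zip(columns, record)}
        for record in reader
        if record  # skip blank lines
    ]


if __name__ == "__main__":
    comma_file = "1,Ann,10.5\n2,Bob,0\n"
    pipe_file = "3|Cy|7.25\n"
    # The same definitions serve both files; only the delimiter differs.
    print(read_delimited(comma_file, CUSTOMER_COLUMNS))
    print(read_delimited(pipe_file, CUSTOMER_COLUMNS, delimiter="|"))
```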

If the column definitions are similar to what you need you can modify the definitions and save the table definition under a new name. You can import and define several different kinds of table definitions including: Sequential files and ODBC data sources. To start the import, click Import>Table Definitions>Sequential File Definitions. The Import Meta Data (Sequential) window is displayed. Select the directory containing the sequential files.


The Files box is then populated with the files you can import. Select the file to import. Select or specify a category (folder) to import into. The format is: <Category><Sub-category> <Category> is the first-level sub-folder under Table Definitions.

<Sub-category> is (or becomes) a sub-folder under the type. In Manager, select the category (folder) that contains the table definition. Double-click the table definition to open the Table Definition window. Click the Columns tab to view and modify any column definitions. Select the Format tab to edit the file format specification.

A job is an executable DataStage program. In DataStage, you can design and run jobs that perform many useful data integration tasks, including data extraction, data conversion, data aggregation, data loading, etc. DataStage jobs are:

- Designed and built in Designer.
- Scheduled, invoked, and monitored in Director.
- Executed under the control of DataStage.

In this module, you will go through the whole process with a simple job, except for the first bullet.

In this module you will manually define the metadata. The appearance of the designer work space is configurable; the graphic shown here is only one example of how you might arrange components.

In the right center is the Designer canvas, where you create stages and links. On the left is the Repository window, which displays the branches in Manager. Items in Manager, such as jobs and table definitions can be dragged to the canvas area. Click View>Repository to display the Repository window. The tool palette contains icons that represent the components you can add to your job design. You can also install additional stages called plug-ins for special purposes.

Several types of DataStage jobs are available:

- Server: not covered in this course. However, you can create server jobs, convert them to a container, then use this container in a parallel job. This has negative performance implications.
- Shared container (parallel or server): contains reusable components that can be used by other jobs.
- Mainframe: DataStage 390, which generates COBOL code.
- Parallel: this course will concentrate on parallel jobs.
- Job Sequence: used to create jobs that control execution of other jobs.

The tools palette may be shown as a floating dock or placed along a border. Alternatively, it may be hidden and the developer may choose to pull needed stages from the repository onto the design work area.


Meta data may be dragged from the repository and dropped on a link. Any required properties that are not completed will appear in red. You are defining the format of the data flowing out of the stage, that is, to the output link. Define the output link listed in the Output name box. You are defining the file from which the job will read. If the file doesn’t exist, you will get an error at run time.

On the Format tab, you specify a format for the source file. You will be able to view its data using the View data button. Think of a link as like a pipe. What flows in one end flows out the other end (at the transformer stage).

Defining a sequential target stage is similar to defining a sequential source stage. You are defining the format of the data flowing into the stage, that is, from the input links.

Define each input link listed in the Input name box. You are defining the file the job will write to. If the file doesn’t exist, it will be created. Specify whether to overwrite or append the data in the Update action set of buttons. On the Format tab, you can specify a different format for the target file than you specified for the source file.

If the target file doesn’t exist, you will not (of course!) be able to view its data until after the job runs. If you click the View data button, DataStage will return a “Failed to open ” error. The column definitions you defined in the source stage for a given (output) link will appear already defined in the target stage for the corresponding (input) link. Think of a link as like a pipe. What flows in one end flows out the other end. The format going in is the same as the format going out.

In the Transformer stage you can specify column mappings, derivations, and constraints. A column mapping maps an input column to an output column; values are passed directly from the input column to the output column. Derivations calculate the values to go into output columns based on values in zero or more input columns. Constraints specify the conditions under which incoming rows will be written to output links. There are two Transformer stages: Transformer and Basic Transformer. Both look the same but access different routines and functions. Notice the following elements of the Transformer: The top left pane displays the columns of the input links.

The top right pane displays the contents of the stage variables. The lower right pane displays the contents of the output link. Unresolved column mappings will show the output in red. For now, ignore the Stage Variables window in the top right pane. This will be discussed in a later module.

The bottom area shows the column definitions (metadata) for the input and output links. Stage variables are used for a variety of purposes: counters, temporary registers for derivations, and controls for constraints.
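The roles of column mappings, derivations, constraints, and stage variables can be illustrated outside DataStage. The following is a minimal Python sketch, not Transformer code; the function `run_transformer` and the column names `id`, `first`, `last`, and `amount` are assumptions made up for the example.

```python
def run_transformer(rows):
    """Apply a column mapping, a derivation, and a constraint to each row."""
    row_count = 0  # plays the role of a stage variable used as a counter
    output = []
    for row in rows:
        row_count += 1
        out = {
            "id": row["id"],                                # column mapping
            "full_name": row["first"] + " " + row["last"],  # derivation
            "row_number": row_count,                        # uses the counter
        }
        # Constraint: only rows with a positive amount reach the output link.
        if row["amount"] > 0:
            output.append(out)
    return output


input_rows = [
    {"id": 1, "first": "Ada", "last": "Lovelace", "amount": 10},
    {"id": 2, "first": "Alan", "last": "Turing", "amount": 0},
    {"id": 3, "first": "Grace", "last": "Hopper", "amount": 5},
]
result = run_transformer(input_rows)
```

Here the second row is counted by the stage variable but rejected by the constraint, so the output contains the first and third rows with row numbers 1 and 3.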

Two versions of the Annotation stage are available: Annotation and Description Annotation. The difference will be evident on the following slides. Add one or more Annotation stages to the canvas to document your job. An Annotation stage works like a text box with various formatting options. You can type in whatever you want; the default text comes from the short description you entered in the job properties, if any. You can optionally show or hide the Annotation stages by pressing a button on the toolbar. The Description Annotation stage is discussed in a later slide.

Before you can run your job, you must compile it. To compile it, click File>Compile or click the Compile button on the toolbar. The Compile Job window displays the status of the compile. A compile will generate OSH. If an error occurs: Click Show Error to identify the stage where the error occurred. This will highlight the stage in error.

Click More to retrieve more information about the error. This can be lengthy for parallel jobs. As you know, you run your jobs in Director. You can open Director from within Designer by clicking Tools>Run Director. In a similar way, you can move between Director, Manager, and Designer.

There are two methods for running a job: run it immediately, or schedule it to run at a later time or date. To run a job immediately, select the job in the Job Status view of Director. The job must have been compiled.

Click Job>Run Now or click the Run Now button in the toolbar. Better yet, switch to the log view from the main Director screen and then click the green arrow to execute the job.

The Job Run Options window is displayed when you click Job>Run Now.

This window allows you to stop the job after a certain number of rows or after a certain number of warning messages. You can validate your job before you run it. Validation performs some checks that are necessary for your job to run successfully. These include verifying that connections to data sources can be made, that files can be opened, and that SQL statements used to select data can be prepared. Click Run to run the job after it is validated.

The Status column displays the status of the job run. Click the Log button in the toolbar to view the job log. The job log records events that occur during the execution of a job. These events include control events, such as the starting, finishing, and aborting of a job; informational messages; warning messages; error messages; and program-generated messages.
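The row and warning limits and the kinds of log events described above can be pictured with a small simulation. This is a minimal Python sketch under stated assumptions, not how Director is implemented; `run_job`, `row_limit`, `warning_limit`, and the rule that a missing value raises a warning are all hypothetical.

```python
def run_job(rows, row_limit=None, warning_limit=None):
    """Process rows, stopping early when a row or warning limit is reached."""
    log = [("control", "Starting job")]
    processed = 0
    warnings = 0
    for row in rows:
        if row_limit is not None and processed >= row_limit:
            log.append(("control", "Row limit reached; stopping job"))
            break
        if row.get("value") is None:
            # Assumed rule for the example: a missing value is a warning.
            warnings += 1
            log.append(("warning", f"Row {row['id']} has no value"))
            if warning_limit is not None and warnings >= warning_limit:
                log.append(("control", "Warning limit reached; stopping job"))
                break
            continue
        processed += 1
    else:
        # Runs only when the loop was not stopped by a limit.
        log.append(("control", "Finished job"))
    return processed, warnings, log


sample_rows = [
    {"id": 1, "value": 10},
    {"id": 2, "value": None},
    {"id": 3, "value": 5},
    {"id": 4, "value": None},
    {"id": 5, "value": 7},
]
unlimited = run_job(sample_rows)
stopped_on_warnings = run_job(sample_rows, warning_limit=2)
stopped_on_rows = run_job(sample_rows, row_limit=2)
```

Each log entry pairs an event type with a message, which mirrors the distinction the job log draws between control events and warning messages.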

A typical DataStage workflow consists of setting up the project in Administrator, including metadata via Manager, building and assembling the job in Designer, and executing and testing the job in Director. Change licensing, if appropriate.

The timeout period should be set to a large number, or choose the “do not timeout” option. Available functions: add or delete projects; set project defaults (Properties button); Cleanup – perform repository functions; Command – perform queries against the repository.

Recommendations: check enable job administration in Director; check enable runtime column propagation; you may also check auto purge of jobs to manage messages in the Director log. You will see different environment variables depending on which category is selected. Reading OSH will be covered in a later module. Since DataStage Enterprise Edition writes OSH, you will want to check this option. To attach to the DataStage Manager client, one first enters through the logon screen.

Logons can be either by DNS name or IP address. Once logged onto Manager, users can import meta data, export all or portions of the project, or import components from another project’s export. Functions: back up a project; export; import; import meta data (table definitions and sequential file definitions, which can also be imported from MetaBrokers); register or create new stages. DataStage objects can now be pushed from DataStage to MetaStage.

Job design process: determine the data flow; import supporting meta data; use the Designer workspace to create a visual representation of the job; define properties for all stages; compile; execute. The DataStage GUI now generates OSH when a job is compiled.
